https://itinai.com/sequential-niah-benchmarking-llms-for-sequential-information-extraction-from-long-texts/

Sequential-NIAH: A Benchmark for Evaluating LLMs in Extracting Sequential Information from Long Texts

Introduction

As businesses increasingly rely on large language models (LLMs) for processing extensive text data, evaluating their ability to extract relevant information from long contexts becomes crucial. Recent advancements in LLMs, such as Gemini-1.5 and GPT-4, have expanded context lengths significantly, but challenges remain in accurately retrieving specific information from lengthy inputs.

Understanding the Need for Sequential-NIAH

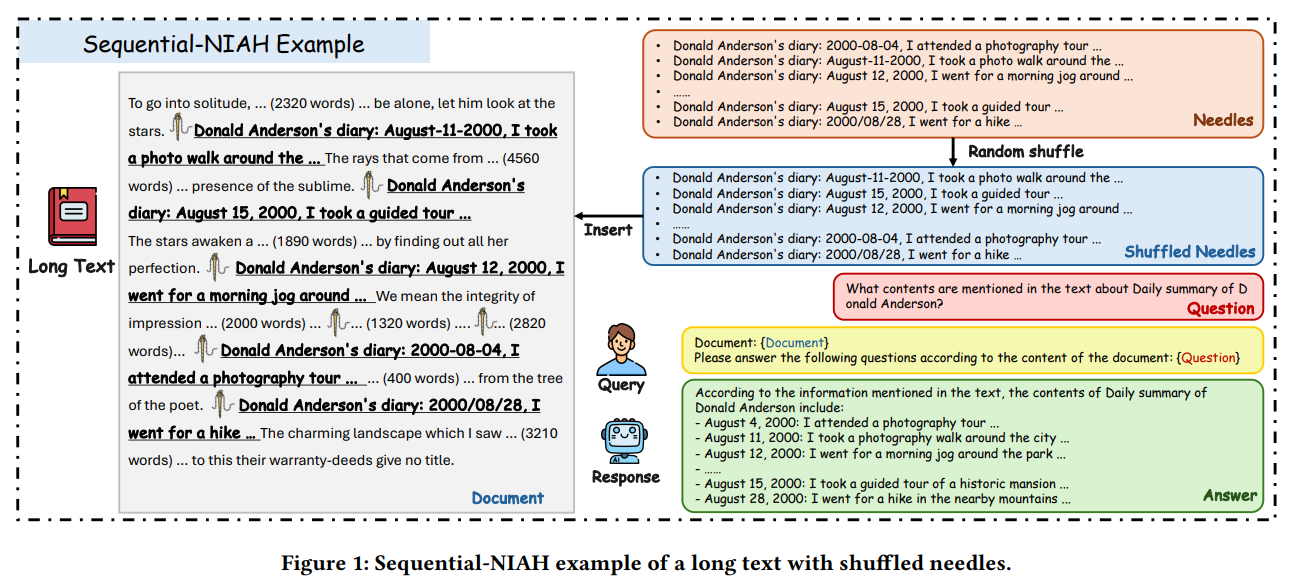

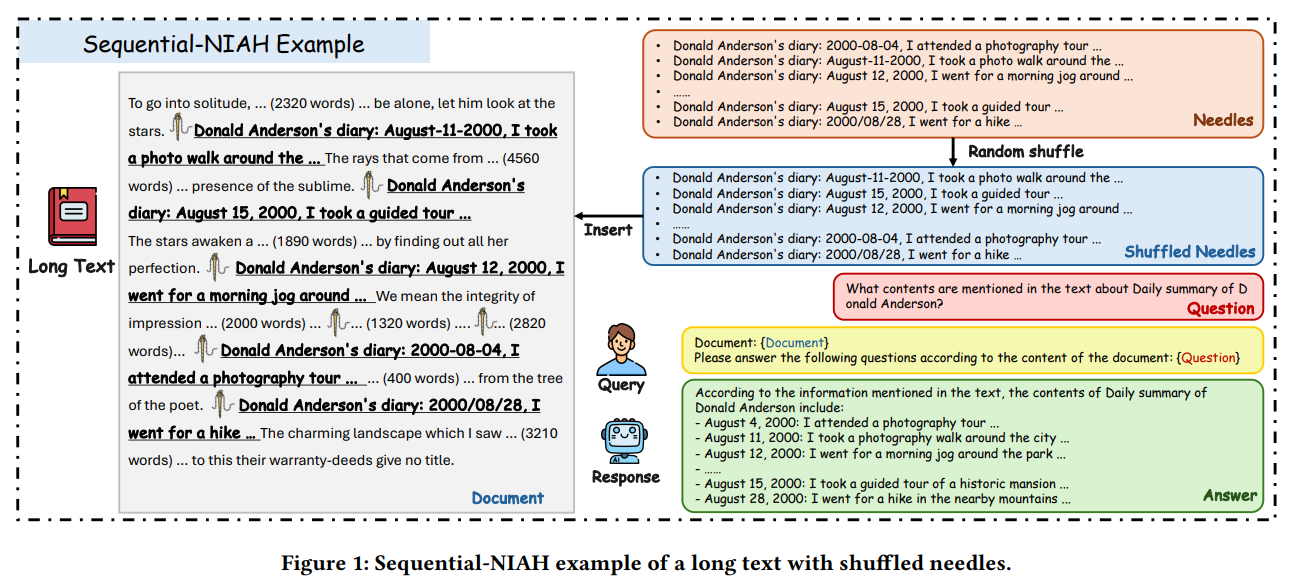

Traditional benchmarks like ∞Bench and LongBench have focused on general context length capabilities but often overlook the nuanced task of retrieving critical information from predominantly irrelevant content. The Sequential-NIAH benchmark addresses this gap by evaluating how effectively LLMs can extract sequentially ordered information, referred to as “needles,” from long texts, or “haystacks.”
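A needle-in-a-haystack sample with sequential needles can be pictured with a minimal Python sketch: a list of ordered "needle" sentences is scattered through irrelevant filler text at random positions while their relative order is kept. The function name `build_haystack`, its parameters, and the sentence-level insertion slots are illustrative assumptions, not the benchmark's actual construction pipeline.

```python
import random
from typing import List


def build_haystack(filler: List[str], needles: List[str], seed: int = 0) -> List[str]:
    """Hypothetical helper: scatter `needles` through `filler` sentences,
    preserving the needles' original (sequential) order."""
    if len(needles) > len(filler) + 1:
        raise ValueError("not enough insertion slots for all needles")
    rng = random.Random(seed)
    # Pick one distinct insertion slot per needle (0..len(filler)), sorted so
    # that needle i always lands before needle i+1 in the final document.
    slots = sorted(rng.sample(range(len(filler) + 1), len(needles)))
    doc = list(filler)
    # Insert from the last slot backwards so earlier slot indices stay valid.
    for slot, needle in reversed(list(zip(slots, needles))):
        doc.insert(slot, needle)
    return doc


if __name__ == "__main__":
    filler = [f"Irrelevant sentence {i}." for i in range(10)]
    needles = [
        "First, the contract was drafted.",
        "Then, it was reviewed by legal.",
        "Finally, it was signed.",
    ]
    # The model would receive the joined haystack plus a question such as:
    print("List the steps of the contract process in order.")
    print(" ".join(build_haystack(filler, needles, seed=1)))
```

A model under test would then be asked to return all needles in their original order; a real benchmark would use much longer haystacks and more varied needle content than this toy setup.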

Historical Context and Benchmark Evolution

Earlier benchmarks, such as RULER and Counting-Stars, provided simplistic setups for information retrieval. NeedleBench improved upon these by incorporating semantically meaningful tasks. However, the need for benchmarks that assess the retrieval and correct ordering of sequential information remains unmet.

Key Features of Sequential-NIAH

The Sequential-NIAH benchmark includes:

- Types of QA Synthesis: It utilizes synthetic, real, and open-domain question-answering pipelines to create diverse scenarios.

- Sample Size: The benchmark comprises 14,000 samples, split into training, development, and test sets, in both English and Chinese.

- Evaluation Model: An evaluation model trained on synthetic data achieved 99.49% accuracy in judging both the correctness and the order of model responses.
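The benchmark relies on a trained model to judge answers, but the two criteria it checks (every needle present, needles in the right order) can be illustrated with a much simpler rule-based checker. The sketch below is a hypothetical stand-in, not the benchmark's evaluator: it uses case-insensitive exact substring matching, so unlike a learned judge it would reject correct answers that paraphrase the needles.

```python
from typing import List


def is_correct_and_ordered(answer: str, expected: List[str]) -> bool:
    """Hypothetical rule-based judge: True only if every expected item
    occurs in `answer`, and the items occur in the given order."""
    text = answer.lower()
    pos = 0  # search for each item only after the previous match
    for item in expected:
        idx = text.find(item.lower(), pos)
        if idx == -1:
            # Item is missing, or it only appears before the previous item.
            return False
        pos = idx + len(item)
    return True
```

For example, `is_correct_and_ordered("Boil water, then add pasta, and finally drain it.", ["boil water", "add pasta", "drain"])` is true, while swapping the first two steps in the answer makes it false.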

Performance Insights

In tests involving popular LLMs, the highest accuracy achieved was 63.15%, by Gemini-1.5, which indicates how difficult the task is. Performance also varied with text length, the number of needles, and the QA synthesis pipeline used. A noise-robustness analysis found that small perturbations had little effect, whereas larger shifts in needle positions reduced the consistency of model outputs.
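Breaking accuracy down by text length or needle count, as described above, amounts to grouping per-sample results and averaging within each group. The sketch below assumes a hypothetical record format (one dict per sample with keys such as `"length"`, `"needles"`, and a boolean `"correct"`); the benchmark's actual result files may be organized differently.

```python
from collections import defaultdict
from typing import Dict, Hashable, List


def accuracy_by(results: List[dict], key: str) -> Dict[Hashable, float]:
    """Group per-sample results by `key` and return {group value: accuracy}.
    Assumes each record has the grouping key and a boolean "correct" field."""
    totals: Dict[Hashable, int] = defaultdict(int)
    hits: Dict[Hashable, int] = defaultdict(int)
    for record in results:
        group = record[key]
        totals[group] += 1
        hits[group] += int(record["correct"])
    # Sort groups so the breakdown reads in increasing length / needle count.
    return {group: hits[group] / totals[group] for group in sorted(totals)}
```

Calling `accuracy_by(results, "needles")` and `accuracy_by(results, "length")` on the same records gives the two breakdowns side by side.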

Case Study: Model Comparisons

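The figures reported for individual models measure different things and should not be read on a single scale:

- Claude-3.5 (87.09%) and GPT-4o (96.07%): since no model exceeded 63.15% on the benchmark task itself, these figures most likely refer to accuracy when the models are used as answer evaluators, for comparison with the 99.49% of the trained evaluation model.

- Gemini-1.5 (63.15%): the best task accuracy among the tested LLMs.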

Practical Business Solutions

To leverage the insights from the Sequential-NIAH benchmark, businesses can:

- Automate Processes: Identify areas where AI can streamline operations, particularly in customer interactions.

- Measure Impact: Establish key performance indicators (KPIs) to evaluate the effectiveness of AI investments.

- Select Appropriate Tools: Choose AI tools that align with business objectives and allow for customization.

- Start Small: Implement AI in manageable projects, analyze results, and gradually expand its application.

Conclusion

The Sequential-NIAH benchmark is a significant advancement in evaluating LLMs' ability to extract sequential information from lengthy texts. With 14,000 samples and a focus on diverse QA synthesis, it highlights the challenges current models face: even the best performer, Gemini-1.5, reached only 63.15% accuracy. The benchmark not only aids LLM research but also offers useful insights for businesses looking to apply AI effectively.

#SequentialNIAH #LLMBenchmarking #InformationExtraction #AIResearch #DataInsights