https://itinai.com/create-a-custom-mcp-client-with-gemini-step-by-step-guide/

Creating a Custom Model Context Protocol (MCP) Client Using Gemini

This guide walks through building a custom Model Context Protocol (MCP) client with Gemini. By the end, you will be able to connect your AI applications to MCP servers so that the model can call the tools those servers expose.

1. Setting Up Dependencies

Gemini API

We will use the Gemini 2.0 Flash model for this tutorial. To obtain a Gemini API key, visit the official Gemini page and follow the instructions there. Store the key securely; you will need it later.

Node.js Installation

Some MCP servers require the Node.js runtime to operate. The server used in this tutorial is launched with npx, which ships with Node.js. Download the latest version of Node.js from the official site and run the installer, keeping all settings at their defaults.
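Once the installer has finished, it can be worth confirming that `node` and `npx` are reachable from your shell, since the configuration later in this guide starts the server through `npx`. A minimal sketch using only the Python standard library; the helper name `find_node_tools` is hypothetical:

```python
import shutil


def find_node_tools(tools=("node", "npx")):
    """Return a mapping of tool name -> resolved path (None if not on PATH)."""
    return {tool: shutil.which(tool) for tool in tools}


if __name__ == "__main__":
    for tool, path in find_node_tools().items():
        print(f"{tool}: {path or 'NOT FOUND, check your install and PATH'}")
```

If either tool is reported as missing, opening a new terminal after installation is often enough for the updated PATH to take effect.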

National Park Service API

In this tutorial, we will connect to the National Park Service MCP server. To access the National Park Service API, request an API key by filling out a short form on their website. The key will be sent to your email, so keep it handy for later use.

Installing Python Libraries

Open your command prompt and enter the following command to install the necessary Python libraries:

pip install mcp python-dotenv google-genai
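A quick way to confirm the install worked is to check that each package can be found by Python. Note that the import names differ from the pip names: `python-dotenv` is imported as `dotenv` and `google-genai` as `google.genai`. The helper below is a hypothetical sketch, not part of any of these libraries:

```python
from importlib.util import find_spec

# Import names (not pip names) for the three packages installed above.
REQUIRED_MODULES = ("mcp", "dotenv", "google.genai")


def missing_modules(names=REQUIRED_MODULES):
    """Return the subset of module names that cannot be located."""
    missing = []
    for name in names:
        try:
            if find_spec(name) is None:
                missing.append(name)
        except ModuleNotFoundError:
            # find_spec raises when a parent package (e.g. "google") is absent.
            missing.append(name)
    return missing


if __name__ == "__main__":
    print("Missing modules:", missing_modules() or "none")
```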

2. Configuring Files

Creating Configuration File

Create a file named config.json to store the configuration details for the MCP servers your client will connect to. Add the following initial content:

{
  "mcpServers": {
    "nationalparks": {
      "command": "npx",
      "args": ["-y", "mcp-server-nationalparks"],
      "env": {
        "NPS_API_KEY": "your_api_key_here"
      }
    }
  }
}

Replace your_api_key_here with the key you generated earlier.
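To illustrate how a client might consume this file, here is a minimal sketch that reads `config.json` and lists the servers it defines. The function names `load_servers` and `describe` are assumptions made for this example; the guide itself does not show this step.

```python
import json
from pathlib import Path


def load_servers(path="config.json"):
    """Read the config file and return the "mcpServers" mapping."""
    config = json.loads(Path(path).read_text(encoding="utf-8"))
    servers = config.get("mcpServers", {})
    for name, spec in servers.items():
        if "command" not in spec:
            raise ValueError(f"server {name!r} has no 'command' entry")
    return servers


def describe(servers):
    """Format one line per server: its name and the command line it runs."""
    return [
        f"{name}: {' '.join([spec['command'], *spec.get('args', [])])}"
        for name, spec in servers.items()
    ]
```

Each entry's `command`, `args`, and `env` are the values a client would hand to `StdioServerParameters` when it launches the chosen server.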

Creating .env File

In the same directory, create a .env file and add the following line:

GEMINI_API_KEY=your_gemini_api_key_here

Again, replace your_gemini_api_key_here with your actual key.
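A common failure at this point is running the client with the placeholder still in place. The sketch below shows one way to fail early with a clear message; it assumes `load_dotenv()` has already copied the `.env` values into the environment, and the helper name `require_env` is hypothetical.

```python
import os


def require_env(name, environ=os.environ):
    """Return an environment variable's value, or fail with a clear message."""
    value = environ.get(name, "").strip()
    # Treat the tutorial's "your_..._here" placeholder as "not configured".
    if not value or value.startswith("your_"):
        raise RuntimeError(f"{name} is missing or still set to the placeholder")
    return value
```

Calling `require_env("GEMINI_API_KEY")` at startup surfaces a misconfigured key immediately instead of at the first request to Gemini.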

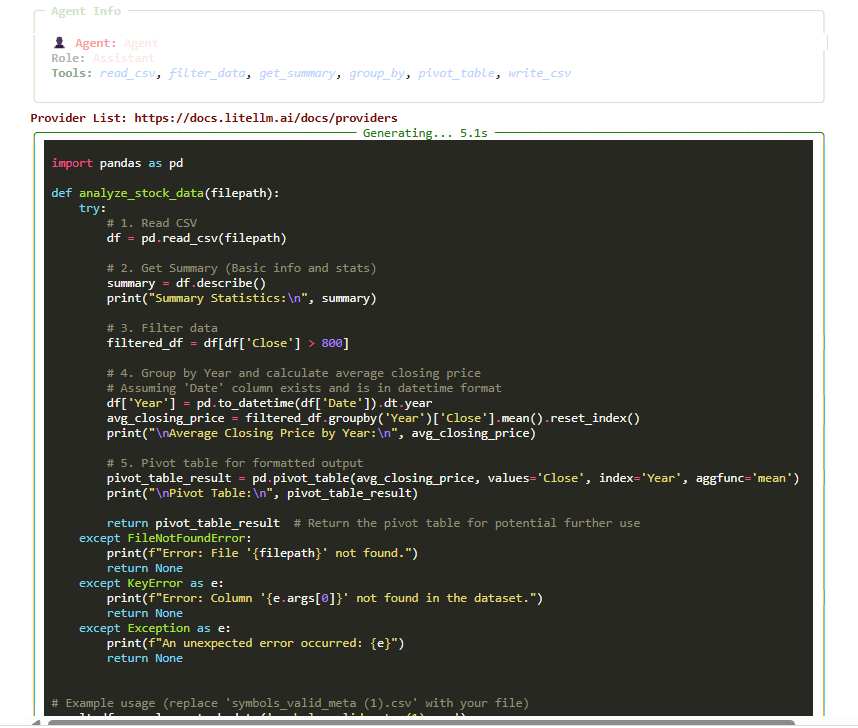

3. Implementing the MCP Client

Basic Client Structure

Next, create a file to implement your MCP Client. Ensure this file is in the same directory as config.json and .env.

Begin by importing the necessary libraries and creating a basic client class:

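import asyncio
import json
import os
from typing import List, Optional
from contextlib import AsyncExitStack

from google import genai
from google.genai import types  # needed for types.Content / types.Part below
from mcp import ClientSession, StdioServerParameters
from mcp.client.stdio import stdio_client  # needed by connect() below
from dotenv import load_dotenv

# Load GEMINI_API_KEY from the .env file into the environment
load_dotenv()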
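The methods in the following sections refer to `self.session`, `self.r_name`, and `self.r_params`, so the class needs somewhere to hold them. Here is a minimal skeleton under stated assumptions: the class name `MCPGeminiClient`, the `exit_stack` attribute, and the `cleanup` method are not shown in this guide and are illustrative only. A full client would also create its Gemini client and implement `select_server` here.

```python
import asyncio
from contextlib import AsyncExitStack
from typing import Any, Optional


class MCPGeminiClient:
    """Holds connection state; the methods shown later attach to this class."""

    def __init__(self) -> None:
        self.session: Optional[Any] = None   # MCP ClientSession once connected
        self.exit_stack = AsyncExitStack()   # owns every async resource we open
        self.r_name: Optional[str] = None    # name of the chosen server
        self.r_params: Optional[Any] = None  # launch parameters for that server

    async def cleanup(self) -> None:
        """Close everything registered on the exit stack and reset state."""
        await self.exit_stack.aclose()
        self.session = None
```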

Connecting to the MCP Server

Implement a method to connect to the selected MCP server:

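async def connect(self):
    # Let the user pick a server; expected to set self.r_name and self.r_params
    await self.select_server()
    # stdio_client is an async context manager, not a coroutine: entering it
    # launches the server process and yields its (read, write) streams.
    # self.exit_stack is assumed to be an AsyncExitStack created in __init__.
    read, write = await self.exit_stack.enter_async_context(stdio_client(self.r_params))
    # ClientSession takes the two streams, not the server parameters
    self.session = await self.exit_stack.enter_async_context(ClientSession(read, write))
    await self.session.initialize()
    print(f"Successfully connected to: {self.r_name}")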
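The transport and the session both need to stay open for the whole chat and then close in the right order, which is what the `AsyncExitStack` imported earlier is for. The sketch below demonstrates the pattern with a stand-in resource in place of the real transport and session, so it runs without a server; `resource` and `demo` are hypothetical names.

```python
import asyncio
from contextlib import AsyncExitStack, asynccontextmanager


@asynccontextmanager
async def resource(name, log):
    """Stand-in for an async resource such as a transport or a session."""
    log.append(f"open {name}")
    try:
        yield name
    finally:
        log.append(f"close {name}")


async def demo():
    log = []
    stack = AsyncExitStack()
    # enter_async_context opens the resource and keeps it open afterwards.
    await stack.enter_async_context(resource("transport", log))
    await stack.enter_async_context(resource("session", log))
    log.append("use session")
    # aclose unwinds in reverse order: session first, then transport.
    await stack.aclose()
    return log
```

Because the stack closes resources in reverse order of entry, the session is shut down before the transport it depends on.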

Handling User Queries

Develop a method to handle user queries and tool calls:

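async def agent_loop(self, prompt: str) -> str:
    # Wrap the user's prompt in the content structure Gemini expects
    contents = [types.Content(role="user", parts=[types.Part(text=prompt)])]
    # process_content is not shown in this guide; it is expected to send the
    # contents to Gemini and resolve any tool calls before returning text
    response = await self.process_content(contents)
    return response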
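The guide does not show `process_content`, so the following is only a sketch of the general shape such a loop tends to take: ask the model, run any tool it requests, feed the result back, and repeat until the model answers in plain text. Everything here is an assumption for illustration. `generate` and `call_tool` are injected stand-ins for the Gemini call and the MCP tool call, and the dictionary format is invented for this example rather than taken from either library.

```python
import asyncio
from typing import Awaitable, Callable, List


async def run_tool_loop(
    contents: List[dict],
    generate: Callable[[List[dict]], Awaitable[dict]],
    call_tool: Callable[[str, dict], Awaitable[str]],
    max_turns: int = 5,
) -> str:
    """Alternate between the model and tools until the model answers in text."""
    for _ in range(max_turns):
        reply = await generate(contents)
        tool_call = reply.get("tool_call")
        if tool_call is None:
            return reply.get("text", "")
        # Run the requested tool, then record both the request and its result
        # so the model sees them on the next turn.
        result = await call_tool(tool_call["name"], tool_call.get("args", {}))
        contents.append({"role": "model", "tool_call": tool_call})
        contents.append({"role": "tool", "name": tool_call["name"], "result": result})
    return "Stopped: too many tool calls without a final answer."
```

The `max_turns` cap is a design choice that keeps a model that requests tools indefinitely from looping forever.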

Interactive Chat Loop

Create an interactive chat loop for user engagement:

async def chat(self):
    print(f"\nMCP-Gemini Assistant is ready and connected to: {self.r_name}")
    while True:
        query = input("\nYour query: ").strip()
        if query.lower() == 'quit':
            print("Session ended. Goodbye!")
            break
        print("Processing your request...")
        res = await self.agent_loop(query)
        print(f"\nGemini's answer: {res}")
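One thing to be aware of is that `input()` blocks the event loop while it waits for the user, and an exception from a single request would end the whole session. The variant below is a sketch of one way to handle both, assuming Python 3.9 or later for `asyncio.to_thread`. The function name `chat_loop` and its injected `answer` and `read_line` parameters are hypothetical and exist mainly to make the loop testable.

```python
import asyncio
from typing import Awaitable, Callable


async def chat_loop(
    answer: Callable[[str], Awaitable[str]],
    read_line: Callable[[str], str] = input,
) -> int:
    """Prompt until the user types 'quit'; return how many queries succeeded."""
    answered = 0
    while True:
        # input() blocks, so run it in a worker thread to keep the loop free.
        query = (await asyncio.to_thread(read_line, "\nYour query: ")).strip()
        if query.lower() == "quit":
            break
        if not query:
            continue  # ignore empty lines instead of sending them to the model
        try:
            print(await answer(query))
            answered += 1
        except Exception as exc:  # keep the session alive after a failed request
            print(f"Request failed: {exc}")
    return answered
```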

4. Running the Client

To run your client, execute the following command in your terminal:

python your_client_file.py

Your client will:

- Read the configuration file to list available MCP servers.

- Prompt the user to select a server.

- Connect to the selected MCP server using the provided settings.

- Interact with the Gemini model through user queries.

- Provide a command-line interface for real-time engagement.

- Ensure proper cleanup of resources after the session.
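The steps above can be tied together in a small entry point. This is a sketch under assumptions: it expects a client object with the `connect` and `chat` methods from this guide plus a `cleanup` method, which the guide mentions but does not show. The function name `run_client` is hypothetical.

```python
import asyncio


async def run_client(client) -> None:
    """Connect, chat, and always clean up, even if something fails midway."""
    try:
        await client.connect()
        await client.chat()
    finally:
        # Runs on normal exit and on errors, so the server process is released.
        await client.cleanup()
```

In your client file, this would typically be started with `asyncio.run(run_client(...))`, passing an instance of your client class.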

Conclusion

By following this guide, you have created a custom MCP client using Gemini. The client can connect to the MCP servers listed in config.json, which lets your AI applications use the tools those servers provide. As businesses increasingly adopt AI technologies, understanding how to implement and manage these systems is crucial for maintaining a competitive edge.
